These academic journal AI policies aren't going to last

I recently came across the following policy on the submission page of an academic journal:

> Use of Artificial Intelligence (AI) tools: One of the goals of Spectrum is to stimulate critical thinking and skill development among authors and reviewers alike. Spectrum discourages the submission of content generated by artificial intelligence (AI)-assisted technologies (such as chatGPT and similar tools). This includes tools that generate text, data, images, figures, or other materials, as well as tools that are used to summarize and synthesize sources. Authors should be aware that such tools are vulnerable to factual inaccuracies, biases, and logical fallacies, and may pose risks to privacy, confidentiality, and copyright.
>
> If authors choose to submit work created with the assistance of AI tools, such use must be disclosed and described in the submission. The disclosure must include: 1) what system was used, 2) who used it, 3) the time/date of the use, 4) the prompt(s) used to generate the content, and 5) the content in the submission that resulted from use of AI tools. The output from the AI system should also be submitted as supplementary material. Authors must accept full responsibility for the accuracy and integrity of the submission. AI systems do not meet the criteria for authorship, and should not be listed as a co-author.

(None of the following should be taken as criticism of this specific journal, which happens to be student-run. Their statement is adapted from a similar policy by the Journal of the Medical Library Association, but it is much more concise and thus possible to quote in full.)

I do not believe these policies are going to last. First, the policy is unclear. Is code generation covered by “tools that generate […] figures, or other materials”? I haven’t checked, but I doubt any of the papers published in this journal include screen recordings of the author’s IDE sessions or complete GitHub Copilot logs as supplementary material. What about using AI tools to review your work, to comment on its clarity, soundness, and style, or to catch mistakes? Should these sessions be captured and reported as well, even if the generated text isn’t directly used?

Second, AI will be increasingly integrated into the tools academics use for coding, writing, reviewing, and referencing. If these AI-use policies remain unreasonably restrictive, they are simply going to be ignored as AI tools become ubiquitous. A transparency requirement achieves nothing if it forces authors to choose between misreporting their process and keeping a meticulous log of their routine AI-assisted workflow just to submit to your journal.

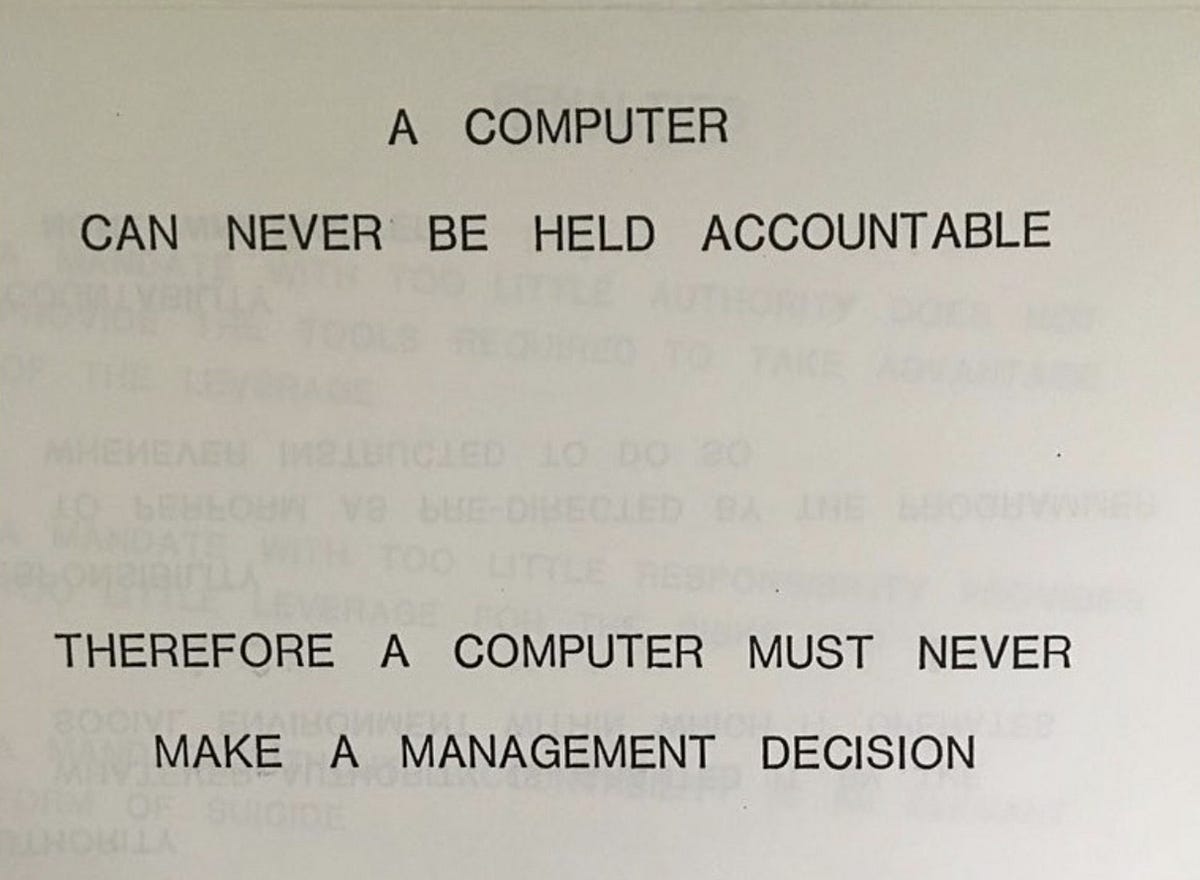

Sooner or later, academic AI-use policies will have to adapt to our rapidly changing reality. There is a reasonable middle ground here: no matter how a piece of text or code came to be, the author is always responsible for it. Disclosures should focus on substantive intellectual contributions, the kind that would meet traditional co-authorship norms if a person had made them. In those cases, it makes sense to acknowledge which tools were used and how.