New Zealand and Australia are really far apart

I think I had the general impression that New Zealand basically hugged the southeastern coast of Australia, but in fact the Kiwis are quite a bit further away from Australia than I thought. The closest points between New Zealand and Tasmania are almost 1,500 km apart (and mainland Australia is even further away).

This is about the same as the straight line distance between Toronto and Winnipeg (for Canadians), Atlanta and Boston (for Americans), or London and Warsaw (for Europeans).

The two closest major cities (i.e., cities everyone would know) are even further apart: Auckland to Sydney is about 2,150 km! This is like Toronto to St. John’s, Newfoundland, Los Angeles to Kansas City, or Rome to Helsinki.
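These straight-line figures are great-circle distances, which the haversine formula computes from latitude and longitude. The sketch below assumes rounded city-centre coordinates for Auckland and Sydney (my approximations, not from the map) and a spherical Earth with mean radius 6,371 km.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; the sphere is an approximation

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

# Assumed approximate city-centre coordinates (latitude, longitude)
AUCKLAND = (-36.85, 174.76)
SYDNEY = (-33.87, 151.21)

print(round(haversine_km(*AUCKLAND, *SYDNEY)), "km")
```

With these coordinates the result comes out at roughly 2,150 km, in line with the figure above.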

Map by DI2000 (CC BY-SA 4.0).

How a nuclear power plant became a haven for wildlife · ↗ www.smithsonianmag.com

This Smithsonian Magazine article by Brigit Katz recounts how the American crocodile in Florida, whose numbers had dwindled to fewer than 300 by the 1970s, recovered in part due to the Turkey Point Nuclear Generating Station. The warm and relatively isolated waters of the power plant’s cooling canals are suitable for nesting and attract not just crocodiles but other wildlife, too.

It’s always fascinating to see how nature can survive and even thrive in man-made habitats. One of my favourite examples is Toronto’s Leslie Street Spit (Tommy Thompson Park), an important bird sanctuary entirely on reclaimed land—literally a rubble peninsula.

Hat tip to SkaldCrypto on Reddit.

Causation does not necessarily imply correlation

Debate any subject with an empirical angle and you will inevitably run into the phrase “correlation does not necessarily imply causation”. While true, it is rarely an interesting observation, and quite often used to reflexively dismiss empirical evidence countering one’s viewpoint (even if this impulse is ultimately correct much of the time). As investor Paul Graham amusingly put it:

Whenever I see a reply mentioning that correlation isn’t causation, without fail it turns out to be saying something stupid. If they made a great seal of midwits, that phrase would be inscribed around the outer edge.

It is more interesting to note another phenomenon that makes causal claims in research difficult: the fact that causation does not necessarily imply correlation, especially when human actors are involved. Economist Scott Cunningham has a great illustration of this at the beginning of his book Causal Inference: The Mixtape:

But weirdly enough, sometimes there are causal relationships between two things and yet no observable correlation. Now that is definitely strange. How can one thing cause another thing without any discernible correlation between the two things? Consider this example, which is illustrated in Figure 1.1. A sailor is sailing her boat across the lake on a windy day. As the wind blows, she counters by turning the rudder in such a way so as to exactly offset the force of the wind. Back and forth she moves the rudder, yet the boat follows a straight line across the lake. A kindhearted yet naive person with no knowledge of wind or boats might look at this woman and say, “Someone get this sailor a new rudder! Hers is broken!” He thinks this because he cannot see any relationship between the movement of the rudder and the direction of the boat.

…

Eroom's law · ↗ en.wikipedia.org

Eroom’s law (Moore’s law backwards) is a term coined by Jack Scannell et al. in 2012 to describe why drug discovery has become slower and more expensive over time. As summarized in the Wikipedia article, there are four primary causes proposed:

- The ‘better than the Beatles’ problem: Many conditions already have successful therapies, and improvements over these existing drugs are likely to be modest (whereas the earlier drugs were often compared against placebos).

- The ‘cautious regulator’ problem: High-profile failures of drug regulation such as Thalidomide and Vioxx have made regulators ever more risk-averse.

- The ‘throw money at it’ tendency: The default response to difficulties in drug discovery is to add resources, leading to cost overruns.

- The ‘basic research–brute force’ bias: Basic research has shifted toward high-throughput methods that may nonetheless be less productive (or at least overestimated in their effectiveness) than classical methods for discovering drugs that actually end up working in patients.

An additional idea (related somewhat to point #1) is that a lot of the low-hanging fruit has already been picked. While it is a somewhat circular argument, it is intuitive that drug discovery is harder because we’ve already found many of the drugs that were easy to discover.

Speaking to the broader slowdown in meaningful scientific progress (at least relative to the volume of academic outputs such as journal articles), I recall someone once made a similar point about the low-hanging fruit, like relativity, having already been picked. Not that relativity was easy to discover, but the point is you can only discover it once!
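For a sense of scale, Scannell et al. reported that the number of new drugs approved per billion (inflation-adjusted) US dollars of R&D has halved roughly every nine years since 1950. This sketch contrasts that decay with the commonly quoted Moore's law doubling of roughly every two years. Both rates are stylized constants here, and the functions are illustrative helpers, not anyone's published model.

```python
def eroom_drugs_per_billion(years_elapsed, start=1.0, halving_years=9.0):
    """Relative drugs approved per $1B of R&D, halving every `halving_years`."""
    return start * 0.5 ** (years_elapsed / halving_years)

def moore_transistors(years_elapsed, start=1.0, doubling_years=2.0):
    """Relative transistor count, doubling every `doubling_years`."""
    return start * 2.0 ** (years_elapsed / doubling_years)

# Compare the two trends over several horizons (relative to year 0)
for years in (0, 9, 18, 36, 60):
    print(years, round(eroom_drugs_per_billion(years), 4), round(moore_transistors(years)))
```

After 60 years at that pace, R&D productivity would be down by a factor of about 100, while Moore's law would have multiplied transistor counts about a billionfold.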

Maduro raid soldier arrested for insider trading on Polymarket for $400,000 score · ↗ www.cnn.com

The anonymous Polymarket trader who made over $400,000 in profit betting on Maduro’s ouster has allegedly been unmasked as special forces soldier Master Sgt. Gannon Ken Van Dyke. Van Dyke was a participant in the raid that captured the former president of Venezuela in early January. He now “faces five criminal charges for stealing and misusing confidential government information, theft and fraud.” The Commodity Futures Trading Commission, which asserts jurisdiction over prediction markets in the United States, has also filed a related civil complaint against the active duty soldier (the first such insider trading case involving prediction markets!).

We have previously discussed on this blog how prediction markets incentivize bad behaviour. The goal of aggregating diffuse knowledge to produce unbiased forecasts is a lofty one, but in practice we get gambling, insider trading, and sometimes outright hostile/antisocial actions to make a bet happen.

To some, insider trading is a feature, not a bug. To quote Coinbase CEO Brian Armstrong on the subject: “If you’re actually optimizing it for a source of news, you 100% want insider trading.” (He uses the example of an admiral sitting in the Suez Canal making a bet based on military intelligence.) Is it worth knowing about events just before they happen if the mechanism is that retail traders (gamblers) get soaked over and over again?

…

Even the most expensive law firms are filing AI slop · ↗ www.lawyer-monthly.com

Sullivan & Cromwell, one of the world’s most expensive law firms, has been caught submitting hallucinated legal citations as part of a routine bankruptcy case. It’s hardly the first time an American law firm has been caught doing this; researcher Damien Charlotin has already documented over 900 instances in the US alone.

I’m a bit surprised the legal profession hasn’t uniformly adopted automated checkers by now (at the very least for hallucinated case names and quotes; interpretation is obviously harder), given how significant the reputational damage from these errors is. It seems like an obvious and achievable step for a famously conservative and detail-oriented profession. In fact, the aforementioned Damien Charlotin seems to have developed such a service himself, and I’m sure competitors exist.
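At its simplest, such a checker only needs to extract "volume reporter page" citations and look each one up. Here is a minimal sketch of that first step. The reporter list is a small, non-exhaustive sample, and the `VERIFIED` set and sample brief are placeholders I made up (fictional case names). A real tool would query an authoritative case-law database, and this version catches only citations that don't exist at all. It would miss a real citation attached to the wrong case name, and fabricated quotes.

```python
import re

# A few common US reporter abbreviations, longest first so "F. Supp. 2d" wins over "F. Supp."
REPORTERS = ["F. Supp. 3d", "F. Supp. 2d", "F. Supp.", "F.4th", "F.3d", "F.2d", "B.R.", "U.S."]
CITE_RE = re.compile(
    r"\b(\d{1,4}) (" + "|".join(re.escape(rep) for rep in REPORTERS) + r") (\d{1,5})\b"
)

def unverified_citations(text, verified):
    """Return (volume, reporter, page) citations found in `text` that are not in `verified`."""
    found = [(int(vol), rep, int(page)) for vol, rep, page in CITE_RE.findall(text)]
    return [cite for cite in found if cite not in verified]

# Placeholder data: fictional case names and an invented "verified" set
VERIFIED = {(123, "F.3d", 456)}
SAMPLE_BRIEF = ("See Doe v. Roe, 123 F.3d 456 (2d Cir. 1999); "
                "see also Acme Corp. v. Widget Co., 999 B.R. 101 (Bankr. D. Del. 2020).")

print(unverified_citations(SAMPLE_BRIEF, VERIFIED))
```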

ggsql: A grammar of graphics for SQL · ↗ opensource.posit.co

This is a pretty darn interesting new release from Thomas Lin Pedersen and his team at Posit (the company behind RStudio): ggsql, a SQL-fied take on the grammar of graphics approach to data visualization made famous by ggplot2. As a veteran ggplot user myself, I will definitely be checking it out. For production-ready plots, I am not sure it will be easier to fiddle with things like label sizes and axis ticks in SQL rather than R, but for the exploratory phase of data analysis, I can immediately see the appeal.

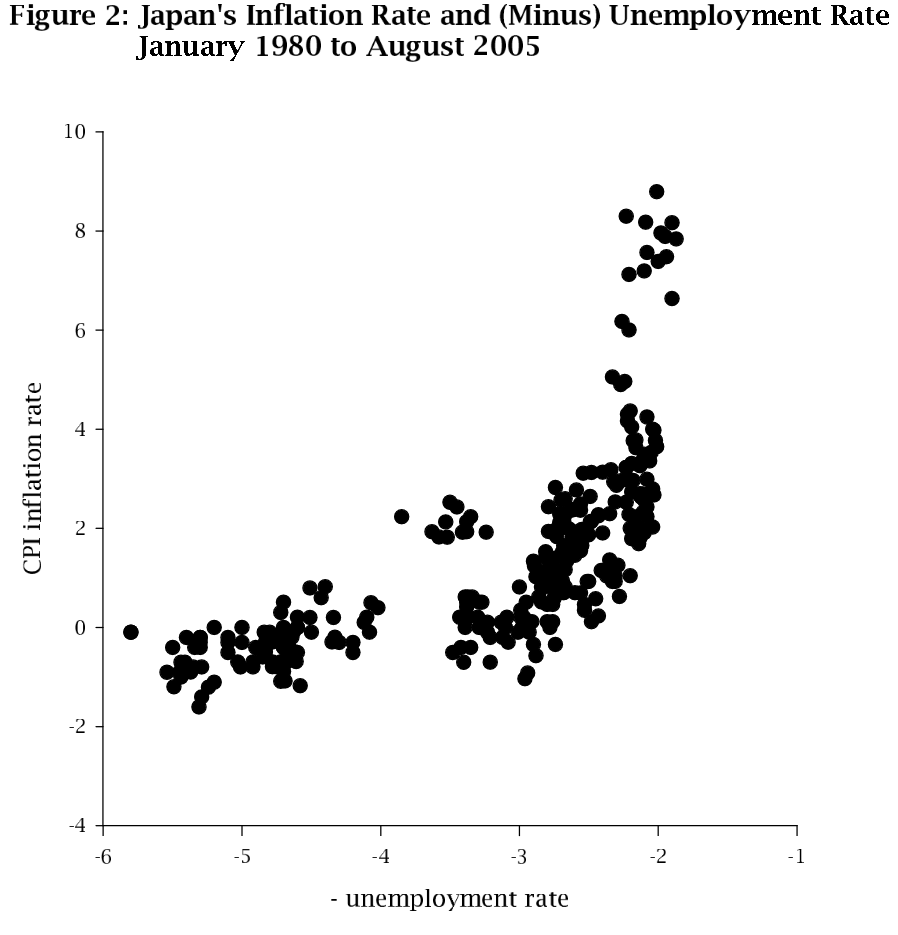

Japan’s Phillips Curve Looks Like Japan · ↗ qed.econ.queensu.ca

Today’s post is a fun one: a working paper from 2006 entitled “Japan’s Phillips Curve Looks Like Japan”.

And indeed, it does:

(Well, as long as you reflect the plot across the y-axis; notice that the x-axis shows -x rather than x.)

The Phillips curve describes the observation that inflation and unemployment have an inverse relationship in the short term (i.e., as unemployment falls, inflation rises and vice-versa).

This humorous working paper did actually lead to a full publication with the same name in 2008:

During the past 15 years Japan has experienced unprecedented, high unemployment rates and low (often negative) inflation rates. This research shows that these outcomes were predictable as part of a stable, readily recognized Phillips curve.

There is a well-known joke in economics attributed to Nobel laureate Simon Kuznets that goes something like this: “There are four types of economies: developed, underdeveloped, Japan, and Argentina.”

I guess this is one way in which Japan’s economy is very much like the rest of the world’s (at least up to 2005).

…

Encouraging results for mRNA therapy for pancreatic cancer · ↗ www.nbcnews.com

Cancer therapies based on mRNA vaccine technology have been among the most promising medical developments of the past decade. That promise is now beginning to show early signs of being realized. An extended follow-up of a phase 1 pancreatic cancer trial published last year reported striking outcomes for some patients:

Six years after treatment, Gustafson and six others who responded to the treatment are still alive, along with two of the eight people who did not respond. Two of the responders, including the one who died, had a cancer recurrence; Gustafson’s cancer has not come back.

In other words, after six years, 7/8 responders are still alive, while only 2/8 non-responders are.

Pancreatic cancer is a particularly aggressive form of cancer, with a 5-year relative survival rate of just 13%. Famously, it was the type of cancer that killed Steve Jobs. It has long been an intense target for research due to its grim prognosis and lack of progress compared to other forms of cancer.

At the same time, this remains very early evidence from a small group of patients. Phase 1 clinical trials are not primarily designed to evaluate efficacy (rather, they are designed to assess safety and establish dosing and side effects). While the difference between responders and non-responders is striking, it does not by itself show the vaccine caused the survival benefit: “responders” are defined after treatment, so they are not a proper control group.

…

Is the pendulum swinging back on free-range childhood? · ↗ bigthink.com

Stephen Johnson reports in Big Think on a movement in the United States to end the fear that parents have of having Child Protective Services called on them for giving their young children some independence to roam their neighbourhoods unsupervised. In recent years, there have been several high-profile cases of parents investigated for neglect for allowing their children freedom of movement that would be considered utterly routine two decades ago.

There are many articles decrying the “helicopter parents” of today, who never let their children out of their sight. But this is rational behaviour when vague laws regarding childhood endangerment/neglect create a climate of fear: even if most people believe allowing kids independence is reasonable, all it takes is one complaint and one sympathetic social worker to create dire consequences for an entire family. This is what activists in the United States are trying to change:

The case helped persuade Georgia legislators to pass a so-called “reasonable childhood independence” (RCI) law, enacted last summer. These laws are part of a national movement to tighten vague language in states’ neglect laws. Georgia’s old law, for instance, defined neglect as the failure to provide “proper” parental care. The new law replaces that with “necessary” care and sets a higher bar for neglect: Parents must demonstrate “blatant disregard” for their child’s safety — putting them in imminent, obvious danger. The law also explicitly states that allowing a reasonably capable child to walk to school or travel to a nearby park unsupervised does not, by itself, constitute neglect.

…

The surprising origin of the citation system controlling academia · ↗ davidoks.blog

David Oks wrote a provocatively titled post a few weeks ago: “How citations ruined science”.

He begins by observing the tidal wave of AI slop in scientific publishing, musing:

But there’s something about all of this that puzzles me.

I get why students, for example, would want to avoid doing homework. But I don’t really understand why scientists would want to avoid doing science. Or, rather, why they’re so eager to use AI to produce a huge number of shoddy papers. No one forced them to become scientists. I imagine that most people who work as scientists chose to do so out of something like love for the subject. So why are scientists using AI to produce and submit so much garbage?

As an aside, this reminded me a bit of writer Freddie deBoer’s piece “If You Don’t Like Writing, Do Something Else” from a few years ago:

For as long as I can remember, these complaints - writer’s block, imposter syndrome, procrastination - have been key elements of writerly self-deprecation. They’re ubiquitous. And, in a sense, the author is correct to suggest that these are tools for identifying those humans who define themselves as writers. Get writers together in a room and soon they’ll be competing to be the one who likes writing the least. But none of it ever meant anything to me.

…

Fake stars are rampant on GitHub · ↗ arxiv.org

This article, “4.5 Million (Suspected) Fake Stars in GitHub: A Growing Spiral of Popularity Contests, Scams, and Malware” (originally posted in late 2024) by He et al., has been doing the rounds lately. It exposes the rampant fraud in the GitHub “star” system, which is apparently taken quite seriously in some corporate circles (I’ve never thought of stars as anything more than a personal bookmark). Their search for fraudulent activity involved querying GHArchive, an archive of all public GitHub events, for data between 2019 and 2024.

A few of their main findings are as follows:

- There was a two-orders-of-magnitude increase in fake stars in 2024. At the peak in July 2024, their program detected (suspected) fake star campaigns for nearly 16% of repos with ≥50 stars in that month.

- Most of these were short-lived malware repositories disguised as unsavoury software like crypto bots, game cheats, and pirated software. The purpose of the other repos was unclear.

- The majority (60%) of suspected users participating in fake star campaigns had little to no organic activity patterns.

- Fake star campaigns had a small positive effect on attracting real stars for the first two months, but after two months they had a negative effect.

See further discussion of this article on Hacker News.
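GHArchive records GitHub's public events as JSON objects, one per line, and starring a repository shows up as a `WatchEvent`. This sketch shows the flavour of the counting involved: stars per repo per month, plus a crude "star-only account" fraction inspired by the finding about accounts with little organic activity. The heuristic and the sample events are my own hypothetical illustration, far simpler than the detection method in the paper.

```python
import json
from collections import Counter, defaultdict

def summarize(event_lines):
    stars = Counter()                 # (repo, "YYYY-MM") -> number of stars
    activity = defaultdict(Counter)   # actor -> Counter of event types
    stargazers = defaultdict(set)     # repo -> actors who starred it
    for line in event_lines:
        ev = json.loads(line)
        actor = ev["actor"]["login"]
        activity[actor][ev["type"]] += 1
        if ev["type"] == "WatchEvent":  # GitHub records a star as a WatchEvent
            repo = ev["repo"]["name"]
            stars[(repo, ev["created_at"][:7])] += 1
            stargazers[repo].add(actor)
    return stars, activity, stargazers

def star_only_fraction(repo, activity, stargazers):
    """Hypothetical heuristic: share of stargazers whose only observed activity is starring."""
    users = stargazers[repo]
    star_only = [u for u in users if set(activity[u]) == {"WatchEvent"}]
    return len(star_only) / len(users) if users else 0.0

def make_event(kind, actor, repo, created_at):
    return json.dumps({"type": kind, "actor": {"login": actor},
                       "repo": {"name": repo}, "created_at": created_at})

# Invented sample events
sample = [
    make_event("WatchEvent", "bot1", "x/cheat-tool", "2024-07-02T10:00:00Z"),
    make_event("WatchEvent", "bot2", "x/cheat-tool", "2024-07-02T10:01:00Z"),
    make_event("WatchEvent", "alice", "x/cheat-tool", "2024-07-03T09:00:00Z"),
    make_event("PushEvent", "alice", "alice/notes", "2024-07-03T09:05:00Z"),
]
stars, activity, stargazers = summarize(sample)
print(stars, star_only_fraction("x/cheat-tool", activity, stargazers))
```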

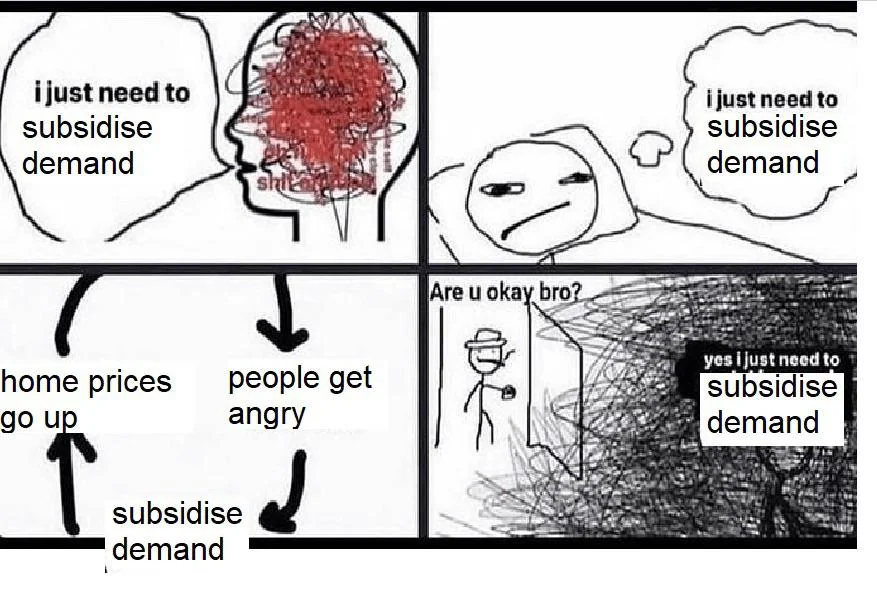

Why you can't just subsidize demand to end Canada's housing crisis · ↗ www.cmhc-schl.gc.ca

Mathieu Laberge, Chief Economist at the Canada Mortgage and Housing Corporation, has a good article out today on why you can’t subsidize your way out of Canada’s housing affordability crisis: simply helping potential homeowners with their mortgage payments ends up raising house prices for everyone. This in turn raises the price-to-income ratio for housing and deepens the housing affordability crisis overall. To avoid this outcome, you need policies to promote homebuilding and increase the housing supply beyond projected levels.
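The mechanism is standard supply-and-demand. Here is a stylized textbook sketch, not CMHC's model: linear demand that responds to the buyer's price net of a per-unit subsidy, and linear supply with slope `d`. All numbers are arbitrary. When supply is slow to respond (small `d`), most of each subsidy dollar is capitalized into the sale price.

```python
def equilibrium_price(a, b, c, d, subsidy=0.0):
    """Market-clearing price in a stylized linear housing market.

    Demand: Q = a - b * (P - subsidy)  (buyers respond to their net-of-subsidy price)
    Supply: Q = c + d * P              (small d means supply responds slowly)
    """
    return (a - c + b * subsidy) / (b + d)

def pass_through(b, d):
    """Share of each subsidy dollar that ends up in the sale price."""
    return b / (b + d)

# Inelastic supply: most of the subsidy shows up as a higher price
p0 = equilibrium_price(100, 1, 20, 0.25)
p1 = equilibrium_price(100, 1, 20, 0.25, subsidy=10)
print(p0, p1, p1 - 10)  # price before, price after, subsidized buyer's net price
```

Here the price rises from 64 to 72. The subsidized buyer nets out at 62, only slightly better off, while every unsubsidized buyer pays 8 more. With a much more responsive supply (`d = 4`), the same subsidy raises the price by only 2.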

A good person to follow on housing policy in Canada, particularly on supply-side interventions, is economist Mike Moffatt of the Missing Middle Initiative.

McDonald’s used to put the vaccine schedule on tray liners · ↗ www.propublica.org

From ProPublica’s new article on RFK Jr.’s anti-vaccine agenda, a throwback to the era before vaccines became controversial in the United States (first on the left and now on the right):

Vaccines, for decades, weren’t politically divisive. They were so uncontroversial that McDonald’s restaurants in the 1990s put the childhood immunization schedule on their tray liners.

Vaccines used to be a unifying issue with broad, bipartisan support:

When the nation’s immunization program was in trouble in the 1980s, Republicans and Democrats stepped in to save it.

An example of vote (in)efficiency in Quebec

I came across a remarkable contrast in vote efficiency in one of political analyst Patrick Déry’s recent newsletters: specifically, the case of the Parti Québécois in 1973 versus today. In the 1973 Quebec general election, the sovereignist party won just 6/110 seats in the province’s National Assembly with 30% of the vote. Today, according to projections, the party has a good shot at winning a majority with just 31% in the polls. A huge gain in vote efficiency, albeit one won over the course of half a century.
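Under first-past-the-post, the same vote share can produce wildly different seat counts depending on how the opposition vote is split. Here is a toy sketch with a hypothetical five-riding province. It is not a model of actual Quebec ridings or of Déry's projections, and the party labels other than PQ are placeholders. It shows one plausible mechanism: about 30% wins nothing against a consolidated opponent but sweeps when the rest of the vote is fragmented.

```python
def seats_won(ridings, party):
    """Count ridings where `party` has a strict plurality (first-past-the-post)."""
    won = 0
    for votes in ridings:
        best_rival = max(v for p, v in votes.items() if p != party)
        if votes[party] > best_rival:
            won += 1
    return won

def vote_share(ridings, party):
    """Party's share of all votes cast across the ridings."""
    return sum(r[party] for r in ridings) / sum(sum(r.values()) for r in ridings)

# Hypothetical province: five identical ridings of 100 voters each
two_way = [{"PQ": 30, "LIB": 55, "OTHER": 15}] * 5
fragmented = [{"PQ": 31, "A": 25, "B": 24, "C": 20}] * 5

for name, ridings in [("two-way", two_way), ("fragmented", fragmented)]:
    print(name, f"{vote_share(ridings, 'PQ'):.0%} of votes,", seats_won(ridings, "PQ"), "of 5 seats")
```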

Adjusting for recalled past vote in political polling · ↗ abacus-weighting.com

The founder of Abacus Data, a Canadian polling firm, dropped kind of an interesting URL yesterday: abacus-weighting.com. It’s an advertisement in the form of a case study on why Abacus weights its political polls on past vote. It fits perfectly with the theme of yesterday’s post on how pollsters get different results from the same data (the answer is that they weight the raw data differently).

If you follow Nate Silver (or American political polling in general), you probably know that pollsters underestimated Trump support in all three elections where he was on the ballot. What I learned from this post is that support for the Conservative Party of Canada has been underestimated in the firm’s polling data in every polling wave for every election since 2011:

In every single wave, across every single election cycle, Conservative voters are underrepresented in our demographically weighted sample relative to their actual share of the vote. Not in most waves. Not in some elections. In every case we can observe.

Weighting for recalled past vote improves the estimate in every case, sometimes dramatically so:

In every election, past vote weighting moved our Conservative estimates upward and our Liberal estimates downward — consistently in the direction of the actual result. The 2021 election shows the most dramatic correction: a 7-point improvement in our Conservative estimate.
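Mechanically, past-vote weighting is a form of cell weighting. Each respondent gets a weight equal to the actual share of their recalled party in the last election divided by that party's share in the sample. Here is a minimal sketch with a hypothetical ten-person sample and hypothetical targets, not Abacus's actual procedure, which presumably combines this with demographic weights. Because past Conservative voters are under-represented in this sample, weighting pulls the Conservative estimate up. Recalled vote is itself noisy, since people misremember how they voted, which is one reason the approach is debated.

```python
from collections import Counter

def past_vote_weights(recalled, targets):
    """Cell weight per respondent: target share / sample share of their recalled past vote."""
    n = len(recalled)
    sample_share = {g: c / n for g, c in Counter(recalled).items()}
    return [targets[g] / sample_share[g] for g in recalled]

def weighted_share(intentions, weights, party):
    """Weighted share of respondents intending to vote for `party`."""
    return sum(w for i, w in zip(intentions, weights) if i == party) / sum(weights)

# Hypothetical sample: recalled past vote and current intention, aligned by respondent
recalled   = ["CON"] * 3 + ["LIB"] * 5 + ["OTH"] * 2
intentions = ["CON"] * 3 + ["LIB"] * 4 + ["CON"] + ["OTH"] * 2
targets = {"CON": 0.4, "LIB": 0.4, "OTH": 0.2}  # hypothetical actual past result

weights = past_vote_weights(recalled, targets)
print(weighted_share(intentions, [1.0] * 10, "CON"), weighted_share(intentions, weights, "CON"))
```

The unweighted Conservative share is 40%; after weighting it is 48%.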

…

How do pollsters get different results from the same data? · ↗ www.nytimes.com

Nate Silver linked to this throwback article from 2016 in The New York Times in his recent article on fake AI polls, which I also wrote about a few days ago. The article, entitled “We Gave Four Good Pollsters the Same Raw Data. They Had Four Different Results.” is a good reminder that modern polling diverges very far from the theoretical ideal of a simple random sample. Even after deciding on a methodology to sample participants and collecting the data, a lot of work goes into interpreting raw poll responses to give us top-line polling numbers. Every pollster needs to figure out how to weight the responses they get, since poll response rates are abysmal and variable across different demographic groups. As in the example given in this piece, these choices can result in large differences in those top-line numbers: from +4 Clinton to +1 Trump, all from the same raw data!
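One common weighting technique is raking (iterative proportional fitting). It rescales weights one variable at a time until each variable's weighted distribution matches its population target. This sketch uses eight hypothetical respondents and made-up targets, and I'm not claiming it is what any of the NYT's four pollsters did. It shows how a single choice, whether or not to weight on education, swings the topline for the same raw data.

```python
def weighted_share(respondents, weights, var, value):
    """Weighted share of respondents with respondents[i][var] == value."""
    return sum(w for r, w in zip(respondents, weights) if r[var] == value) / sum(weights)

def rake(respondents, margins, iterations=200):
    """Iterative proportional fitting: adjust weights until each variable matches its margin."""
    weights = [1.0] * len(respondents)
    for _ in range(iterations):
        for var, targets in margins.items():
            current = {v: weighted_share(respondents, weights, var, v) for v in targets}
            weights = [w * targets[r[var]] / current[r[var]]
                       for r, w in zip(respondents, weights)]
    return weights

# Hypothetical raw data: degree holders are over-represented and lean toward candidate A
rows = [("young", "degree", "A"), ("young", "degree", "A"), ("young", "none", "B"),
        ("old", "degree", "A"), ("old", "degree", "B"), ("old", "degree", "A"),
        ("old", "none", "B"), ("old", "none", "B")]
respondents = [dict(zip(("age", "educ", "vote"), row)) for row in rows]
AGE = {"young": 0.5, "old": 0.5}          # hypothetical population targets
EDUC = {"degree": 0.35, "none": 0.65}

w_age = rake(respondents, {"age": AGE})
w_both = rake(respondents, {"age": AGE, "educ": EDUC})
print(f"A share, age only: {weighted_share(respondents, w_age, 'vote', 'A'):.3f}")
print(f"A share, age + education: {weighted_share(respondents, w_both, 'vote', 'A'):.3f}")
```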

For an interesting follow-up: “Polling is becoming more of an art than a science”, also on Nate Silver’s Substack.

Scientists invent a fake disease, AI picks it up, other scientists cite it · ↗ www.nature.com

A somewhat disturbing bit of reporting from Nature tells the story of bixonimania, a fake eye disease invented by Swedish medical researcher Almira Osmanovic Thunström and her team. She seeded the idea for the fake disease in a series of ridiculous, joke-filled blog posts and preprints in mid-2024.

Because AI can be overly credulous with its sourcing (how often do Google’s AI answers confidently cite random Reddit posts for the bulk of an answer?), the disease got picked up as an “emerging term” by the leading chatbots. The preprints even got cited a handful of times in real publications, which is further evidence that scientists don’t read the papers they cite (I guess the modern equivalent of copying citations from other papers is having AI dredge the literature for you).

I can see AI agents being exploited by those pushing dubious medical diagnoses to flood the Internet and preprint servers with articles aimed at convincing LLMs of the validity of their positions. That is, if the agents aren’t too busy spinning up websites to defame those who incur their wrath.

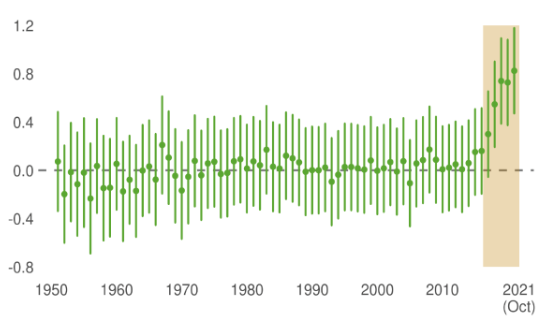

A data point against the idea that AI will freeze/homogenize culture · ↗ arxiv.org

Here’s an interesting figure and accompanying passage from this 2023 preprint entitled “Machine Culture”:

The innovations generated by AlphaGo and AlphaGo Zero soon entered human culture, as shown by research comparing human gameplay before and after the algorithms’ introduction. The decision quality, as measured by an open-source variant of AlphaGo Zero, showed very little improvement in human gameplay from 1950 to 2016, followed by a sudden improvement after the introduction of AlphaGo in March 2016. However, this improvement wasn’t solely due to humans adopting strategies developed by AlphaGo. It also reflected an unexpected shift, wherein humans started developing moves that were qualitatively distinct both from previous human moves and from the novel moves introduced by AlphaGo. In summary, AlphaGo served as an early, quantifiable exemplar of machine culture, generating novel cultural variations through genuine, nonhuman innovation. This was followed by a major transition into an even broader range of traits as the result of humans building on the previous discoveries made by machines. As the methods underpinning AlphaGo have been generalized to other games and extended to scientific problems, we anticipate a continued infusion of machine-generated discoveries across diverse domains of human culture.

…

AI makes it easier to generate fake papers, too · ↗ tylervigen.com

Here’s a fun project from Tyler Vigen, creator of the famous Spurious Correlations page (which has been cited as a cautionary tale in many a science class). Using his database of real but spurious correlations (created by calculating the Pearson correlation coefficient r between a very large number of variables and picking out the hits), he used AI to create amusing fake manuscripts expounding on these statistical flukes as if they were real research questions.

These papers were generated in January 2024, and as previously discussed on this blog, the pipeline for end-to-end paper generation has come a long way in two years. I have no doubt Tyler could make these papers sound much more convincing using today’s models, though of course his goal here is to make you laugh (and think), not to trick you. But I expect many scholars will adopt this data dredging strategy to generate “real” papers, adding to the deluge already flooding the academic publishing system.

What is a public opinion poll without the public? · ↗ www.nytimes.com

A few days ago, two professors (Leif Weatherby and Benjamin Recht) published an opinion piece in the New York Times calling attention to Axios publishing a story on maternal health using invented polling results:

A recent Axios story on maternal health policy referred to “findings” that a majority of people trusted their doctors and nurses. On the surface, there’s nothing unusual about that. What wasn’t originally mentioned, however, was that these findings were made up.

Clicking through the links revealed (as did a subsequent editor’s note and clarification by Axios) that the public opinion poll was a computer simulation run by the artificial intelligence start-up Aaru. No people were involved in the creation of these opinions.

The piece goes on to argue that this so-called “silicon sampling” is seductive because good public opinion polling is expensive, hard to do, and still prone to bias. But this shortcut magnifies the problem of bias rather than solving it.

I’ve read a little bit about this strategy of using LLM-generated survey participants in the context of social science research in a series of posts (mostly from Prof. Jessica Hullman) over on Andrew Gelman’s blog:

- Validating language models as study participants: How it’s being done, why it fails, and what works instead (2025-12-19)

- Survey Statistics: Thomas Lumley writes about Interviewing your Laptop (2025-08-26)

- When does it make sense to talk about LLMs having beliefs? (2025-08-15)

- Better and worse ways to mix human and LLM responses in behavioral research (but you still have to figure what you’re measuring) (2025-06-12)

- LLMs as behavioral study participants (2025-05-29)

Silicon sampling seems moderately interesting from a research perspective, but I can’t help but agree with the New York Times opinion piece authors that this will be ruinous for the already waning trust in public opinion polling. If you didn’t bother to ask the public, then why should the public care what you “find”? I think there is probably a lot of utility in using LLM samples to aid in designing and validating surveys, though.

Social media is a freak show · ↗ www.natesilver.net

I quite enjoyed Nate Silver’s recent Substack post “Social media has become a freak show” (curiously, the title element of the page is “Social media is turning into a freak show”—I think the transformation has already occurred).

Nate Silver is still a Twitter power user, and yet even he acknowledges the increasing uselessness of Twitter for driving traffic to his newsletter or even just providing a forum for thoughtful engagement. I myself abandoned the platform a few years ago, having seen the direction it was heading under Elon Musk. My impression is that the utility of Twitter in most domains is asymptotically approaching zero, with a handful of exceptions (I will occasionally lurk for AI news, as the discussion is still robust, if polluted with a ton of low-quality bot or bot-like replies).

The rest of the social media ecosystem isn’t much better. Bluesky has declining engagement, probably because it has replicated Twitter’s old schoolyard dynamics on steroids. Facebook hasn’t been relevant for years, and I have no idea what it’s even for anymore if not connecting with your friends (I haven’t had an account in many years). Instagram might still be fun, though I have no idea because I’ve never used it. But it’s certainly not a place where “the discourse” happens.

…

How effective are Amber alerts? · ↗ www.mcgill.ca

A few weeks ago, I experienced a situation familiar to many Canadians, described in this article from Jonathan Jarry of McGill University’s Office for Science and Society:

On Sunday, March 22nd of this year, a large swath of the population in Quebec was woken up at 4:25 as cell phones lit up and screamed. An Amber alert had been broadcast. Less than four hours later, the two missing children were thankfully found, unharmed, and the alert was cancelled.

Thankfully, my iPhone respects silent mode and only vibrated forcefully, but apparently not all phone brands respect this setting. Unlike in the United States, Amber alerts to cell phones in Canada cannot be disabled.

The statistics regarding child abductions and Amber alerts discussed in this article are equal parts comforting and disconcerting. For example, most children who are the subject of an Amber alert are recovered unharmed:

a study published a decade ago and looking at 448 Amber alerts in the U.S. revealed that over 95% of the children had been recovered alive and nearly 90% recovered alive and without physical harm, sexual abuse, or withholding of needed medical care during the abduction. Even when Amber alerts don’t trigger a helpful tip, the child is usually found.

Other research from the United States indicates that Amber alerts play a part in the recovery about 25% of the time. However, they may be issued too late to prevent the worst outcomes:

…

The definition of "agent"

An interesting exchange between Guido van Rossum and Andrej Karpathy a few days ago on Twitter:

Guido van Rossum: I think I finally understand what an agent is. It’s a prompt (or several), skills, and tools. Did I get this right?

Andrej Karpathy: LLM = CPU (data: tokens not bytes, dynamics: statistical and vague not deterministic and precise) Agent = operating system kernel
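Taken together, the two definitions describe a loop. A prompt goes to the model, the model asks for a tool, and something like a kernel dispatches the call and feeds the result back until the model produces an answer. Here is a toy sketch of that loop. `fake_llm`, `TOOLS`, and `run_agent` are hypothetical names, the "model" is a scripted stub rather than a real LLM call, and none of it mirrors any particular framework's API. Skills are omitted.

```python
from typing import Callable

def fake_llm(transcript: list[dict]) -> dict:
    """Hypothetical stand-in for a model call: requests one tool call, then answers."""
    tool_results = [m for m in transcript if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "tool": "add", "args": {"a": 2, "b": 3}}
    return {"type": "final", "content": f"The sum is {tool_results[-1]['content']}."}

TOOLS: dict[str, Callable[..., object]] = {
    "add": lambda a, b: a + b,
}

def run_agent(prompt: str, llm=fake_llm, tools=TOOLS, max_steps=5) -> str:
    """The 'kernel': loops over model calls, dispatching tool requests until a final answer."""
    transcript = [{"role": "system", "content": "You may call tools."},
                  {"role": "user", "content": prompt}]
    for _ in range(max_steps):
        step = llm(transcript)
        if step["type"] == "final":
            return step["content"]
        result = tools[step["tool"]](**step["args"])  # execute the requested tool
        transcript.append({"role": "tool", "content": str(result)})
    raise RuntimeError("agent did not finish within max_steps")

print(run_agent("What is 2 + 3?"))
```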

The triumph of the data raccoons

My PhD co-supervisor at the University of Toronto, Dr. David Fisman, liked to use the term “data raccoon” to describe the work of using messy, incomplete, hard-to-work-with data to do serious research. Or, as he described it in testimony to the Canadian House of Commons in May 2020 (emphasis mine):

I’ll tell you, my group at University of Toronto call ourselves “data raccoons”, because we’ve sort of managed to thrive for about 15 years on data that most people regard as garbage, so it’s sort of a bit of the normal state of affairs for us with public health data analysis.

It’s an unmistakably Toronto metaphor—the city isn’t called the raccoon capital of the world for nothing!

But now the data raccoons have gone and taken over the world. The basis of the AI revolution is vast quantities of text dredged from the Internet, almost none of it written for the purpose of training the deus ex machina.

Arguably the most important dataset for training LLMs has been Common Crawl, a mostly uncurated archive of the web that has been running since 2007. According to a Mozilla report from 2024, Common Crawl was used in two thirds of LLMs developed in the formative period between 2019 and 2023, and the archive also comprised 80% of the tokens in OpenAI’s GPT-3. Unsurprisingly, the Common Crawl Foundation has received financial support from AI companies in recent years, while also facing accusations that it helped those same companies train their models on paywalled articles.

…

Andrew Gelman's blog schedule

Andrew Gelman, professor of statistics at Columbia University, runs one of my favourite blogs on the Internet. He has been writing there for over 21 years, since October 2004. Many of his collaborators also contribute to the blog, but he is the primary author. In a 2024 post celebrating 20 years of blogging, Gelman mentions having over 12,000 posts. This is a cadence of over 1.6 posts/day sustained for two decades!
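The cadence arithmetic is easy to check. This sketch assumes the blog started on October 1, 2004, that the 12,000 count was taken exactly 20 years later, and (for the queue estimate) that queued posts drain at about one per day, matching the one non-topical post per day mentioned below. Since the count is "over 12,000", the rate is a lower bound.

```python
from datetime import date

POSTS = 12_000                                    # "over 12,000 posts" (2024 anniversary post)
start, anniversary = date(2004, 10, 1), date(2024, 10, 1)  # assumed exact dates
days = (anniversary - start).days
print(f"{POSTS / days:.2f} posts/day over {days} days")

# Assumed: the queue drains at about one non-topical post per day
QUEUED = 170                                      # "170 blog posts in the queue" (August 2016)
print(f"~{QUEUED / (365.25 / 12):.1f} months of queued posts")
```

That gives about 1.64 posts per day, and the August 2016 queue works out to roughly five and a half months of content.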

One of the more unusual things about Gelman’s blog is that most posts are not particularly topical. Sure, many posts are time-sensitive, posting about upcoming events or commenting on recent publications (like doing damage control on deeply flawed papers likely to receive attention). But there is generally one non-topical post each day. A line in a recent post caught my eye:

As regular readers know, our posts are usually on a 6-month lag, but this one is so important I had to share it with you right away.

As a regular reader myself, I was aware of the delayed posting schedule, but out of curiosity, I wanted to see how far back this habit went. Here’s the rough timeline I came up with:

- In 2011, Gelman wrote that his “non-topical blog entries are on approximately one-month delay”.

- In 2012, he referred to “stacking up posts here with a roughly one-month delay”.

- In 2014, he said that “most of the posts here are on a 1 or 2 month delay.”

- In 2016, he casually mentioned “our 2-month delay”.

- Later that year (August 2016), in a post literally titled “My next 170 blog posts”, he said he had filled “the blog through mid-January” and had “170 blog posts in the queue.”

- By 2018, he mentioned the blog was “mostly on a six-month delay”.

- In 2019, he referred to “our 6-month blog delay.”

- In 2022, he wrote: “Usually I schedule these with a 6-month lag, but this time I’m posting right away”.

- In February 2026, he said the “current end of the blog queue is in early July”.

- Then, in April 2026, came the latest “usually on a 6-month lag” remark.

It seems the blog had about one month of content in the publishing pipeline by 2011, ramped up to one to two months by 2014 and two months by early 2016, then jumped to roughly five to six months by August 2016, where it has been ever since. Quite the arsenal of scheduled content!
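Two of the quotes give both a writing date and the end of the queue, so the lead time can be estimated directly. A rough sketch, assuming hypothetical exact days (the 15th for "mid-month" references and the 5th for "early July"), since the quotes only give month-level precision:

```python
from datetime import date

# Hypothetical exact dates: the quotes only give month-level precision,
# so the 15th (mid-month) and the 5th ("early July") are assumptions.
snapshots = {
    "August 2016": (date(2016, 8, 15), date(2017, 1, 15)),   # "through mid-January"
    "February 2026": (date(2026, 2, 15), date(2026, 7, 5)),  # "early July"
}

AVG_DAYS_PER_MONTH = 365.25 / 12  # about 30.44 days

# Days between when the remark was written and the end of the queue.
lags = {label: (end - start).days for label, (start, end) in snapshots.items()}

for label, lag_days in lags.items():
    months = lag_days / AVG_DAYS_PER_MONTH
    print(f"{label}: {lag_days} days, about {months:.1f} months")
```

Under these assumptions both snapshots come out at roughly four and a half to five months of queued content, in the same ballpark as the nominal six-month lag.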

…

Testing ZeroClaw, Part 2.5: ZeroClaw is alive!

Yesterday, I wrote about how the ZeroClaw GitHub repository had been down for two days with little explanation. Earlier today, the project provided a little more information on Twitter:

They flagged our org which is why we’re down. Code is safe and we’re still working, just waiting for @github

Since March 30 (the day after their repo started 404ing), the project has been promising a blog post to explain the situation. That post is now available:

Over the past few days, a maintainer used aggressive AI automation to review and merge PRs:

- Merges went through that shouldn’t have.

- In the process of trying to undo the damage, the maintainer’s GitHub account was flagged, which triggered enforcement actions on the ZeroClaw org itself.

- That maintainer has been removed from the project.

This sounds strikingly similar to the incident that occurred about a month ago, which I also mentioned in yesterday’s post:

Earlier today, during routine maintenance, the visibility of the `zeroclaw-labs/zeroclaw` repository was accidentally changed from public to private and was later restored to public.

After reviewing the GitHub API audit logs and collecting detailed feedback from our engineers, we confirmed that the incident was caused by improper use of an AI agent tool during maintenance.

Obviously, the use of agentic workflows in open source projects is an emerging area where best practices have not yet been established. The case of ZeroClaw should be a warning to other projects to keep human review in the loop, or at least to limit the autonomy of agents when a project has numerous contributors. As they say in their blog post:

…

Testing ZeroClaw, Part 2: ZeroClaw is dead?

Earlier this month, I wrote about setting up one of the many lightweight OpenClaw alternatives, namely ZeroClaw. I had some issues with initial setup, but I got to the point where I could talk with my bot over Telegram.

Some of my initial enthusiasm for ZeroClaw was dampened by the divergence between the docs and the features available in the release build. The release build was quite out of date due to the breakneck pace of development. In the week or two following my initial setup, the release build pipeline was broken, so even when they released a new tag, there were no new precompiled binaries available. Being forced to compile the Rust binary yourself kind of goes against the project’s philosophy of ultra-low resource consumption, since building a sizeable Rust project is itself fairly memory- and CPU-hungry.

They eventually fixed the release pipeline and I started casually working on a system where I could send notes and ideas for blog posts to my bot through Telegram and have it turn them into structured Markdown files.

But two days ago (March 29), I noticed that the ZeroClaw GitHub repo was 404ing. On the same day, the project posted the following on Twitter:

Our GitHub repo is currently returning a 404 for some users. We’re aware and actively investigating. The repo is public and all code is safe.

…

One important fact about for-profit plasma donation

For-profit plasma donation is in the news today in Canada. Two people recently died after giving plasma at Grifols for-profit plasma clinics in Winnipeg, Manitoba, although Health Canada has yet to find a link between the plasma collections and the deaths. Today, it was reported that a Grifols clinic in Calgary, Alberta, was found non-compliant during an inspection in December 2025:

The inspection found the Calgary centre didn’t accurately assess whether donors were suitable, didn’t collect blood according to its Health Canada authorization, didn’t thoroughly investigate errors and accidents, and didn’t carry out sufficient corrective and preventative actions.

This is obviously a problem for for-profit plasma collection in Canada, where the practice is already controversial. Paid plasma collection is illegal in Canada’s three largest provinces: Ontario, British Columbia, and Quebec, though Ontario allows a few for-profit clinics to operate through an agreement with Canadian Blood Services, Canada’s independent blood authority. British Columbia and Quebec together make up over 35% of Canada’s population; including Ontario, it’s about three-quarters. Besides Ontario, for-profit clinics exist in some other smaller provinces.
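The population shares can be checked quickly. A minimal sketch, assuming 2021 Census population counts (more recent estimates shift the percentages slightly, but not by much):

```python
# 2021 Census population counts (assumed figures; newer estimates differ slightly).
population = {
    "Canada": 36_991_981,
    "Ontario": 14_223_942,
    "Quebec": 8_501_833,
    "British Columbia": 5_000_879,
}


def share(*provinces: str) -> float:
    """Fraction of Canada's population living in the given provinces."""
    return sum(population[p] for p in provinces) / population["Canada"]


bc_qc = share("British Columbia", "Quebec")
big_three = share("Ontario", "British Columbia", "Quebec")

print(f"BC + Quebec: {bc_qc:.1%}")
print(f"Ontario + BC + Quebec: {big_three:.1%}")
```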

There is vocal advocacy against paid plasma collection, which has led to municipal resolutions against the practice in Ontario, even as clinics open. This advocacy is often premised on the fear that paid plasma will undermine voluntary donations. To my mind, the central fact in the for-profit plasma collection debate is that only a handful of countries are self-sufficient in plasma collection, and all of them allow for paid plasma collection. They are: the United States, Germany, Czechia, Austria, and Hungary (Egypt may also have recently joined the list). While other countries, like Canada, may have achieved self-sufficiency for plasma for direct infusion, no other country can meet its own needs for plasma-derived medical products. The world relies on a small number of self-sufficient countries, primarily the United States, to meet the demand for plasma products.

…

How to avoid cognitive surrender to AI · ↗ alexpanetta.substack.com

I am sharing a thoughtful article today from Alex Panetta’s A.I. For You on avoiding over-reliance on AI: “cognitive debt”, “epistemic debt”, or “cognitive surrender”.

A particularly interesting nugget regarding the “Your Brain on ChatGPT” article from the MIT Media Lab (yes, that MIT Media Lab):

The paper is even written to get LLMs to read it carefully. The paper carries instructions telling LLMs which section to read first, which appears to be a clever way to force relevant context atop the context window, as LLMs tend to best remember the beginning and end of conversations — not the middle.